One very useful takeaway from this discussion for me is this: the real question may no longer be whether ODA / AN already contains many of the necessary ingredients - because clearly, a lot of them are already emerging.

The harder question is whether those ingredients are enough to preserve coherence once autonomy becomes increasingly distributed, dynamic, and eventually federated across boundaries.

Mark's point about viable systems was especially helpful here, because it reframes the issue beautifully: How does distributed autonomy remain coherent without falling back into central control?

And what is interesting is that some recent work - including TM Forum conflict-management patterns and emerging 6G reasoning-native agent communication - seems to be circling around the same issue.

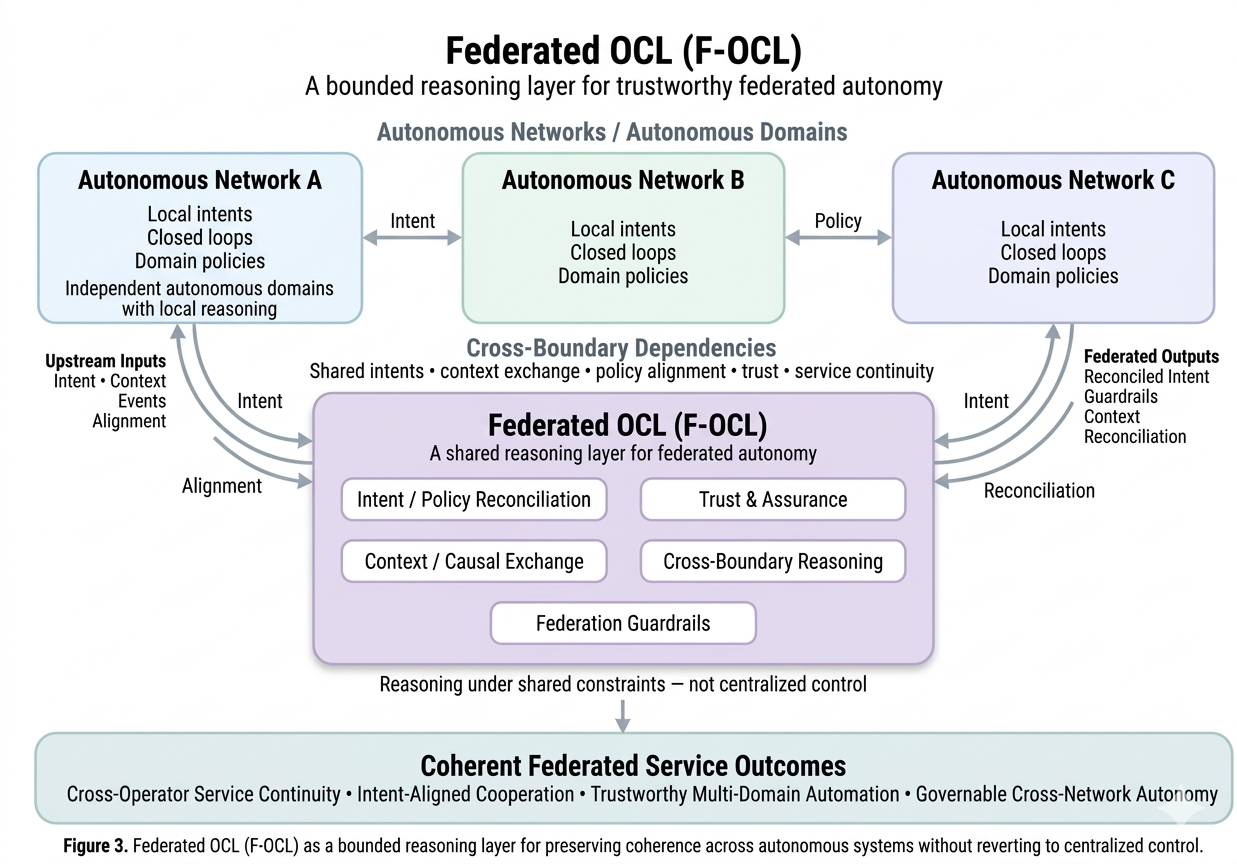

That is what led me to think one step further: not just OCL inside one operational environment, but Federated OCL (F-OCL) as a bounded reasoning layer for trustworthy federated autonomy.

That is what I am trying to explore in Post #3.

From OCL to Federated OCL: What Makes Cross-Network Autonomy Trustworthy?

In the first two posts, I argued that Autonomous Networks may need something more than local closed-loop intelligence to remain coherent at system scale.

To visualize how F-OCL operates, I often look at two real-world models:

⦁ The Human Nervous System: Think of domain closed loops as our autonomic nervous system (managing heart rate and digestion automatically). OCL acts as the central nervous system, providing higher-level supervision to ensure these functions align with the body's overall activity (like running or resting) without micromanaging them.

⦁ Peer-to-Peer Professional Collaboration: When two experts from different organizations work together, they don't necessarily need a common boss. Instead, they share context, negotiate trade-offs based on their respective constraints, and reach a consensus to achieve a shared goal. F-OCL is the framework that enables this distributed reasoning between autonomous networks.

These analogies help clarify that OCL is not a 'super-controller' but a functional layer for operational alignment and systemic judgment.

That led me to the idea of an Operational Cognition Layer (OCL) - not as a central controller, but as a way to make system-level operational reasoning more explicit when multiple autonomous loops, intents, and policies begin to interact.

But once we move beyond a single domain - or even a single operator boundary - the challenge becomes much harder. At that point, the question is no longer only: How do autonomous loops avoid conflicting with each other?

It becomes: How much explicit system-level cognition is needed to make federated autonomy trustworthy?

Why this becomes harder across boundaries

Inside a single autonomous environment, coherence is already difficult. Across multiple environments, it becomes even more complex, because we are no longer dealing only with competing intents, local optimization conflicts or policy trade-offs within one operational scope.

We are dealing with federated autonomy across different domains, different vendors, different operational stacks, different trust models and sometimes even different organizations. At that point, simple coordination is not enough.

Even if domains can exchange intents, events, telemetry or agent messages, that does not automatically mean they will remain operationally coherent. Because autonomy can still diverge.

The deeper problem: not only information exchange, but reasoning divergence

This is where I think the discussion becomes especially interesting. A future autonomous environment may allow domains or agents to exchange state, context, goals and policy signals. But even when they share information correctly, they may still reason differently about what should happen next. That means the problem is not only state exchange. It is also reasoning divergence. Two autonomous domains may both be "correct" in their own local logic - and still become collectively unstable when their decisions interact over time.
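To make "locally correct, collectively unstable" concrete, here is a toy sketch of my own (not taken from any TM Forum specification). Two hypothetical domains see exactly the same state of a shared link, so there is no information gap, yet they apply different local rules. The domain names, thresholds and step size are all illustrative assumptions.

```python
LOAD = 60.0  # constant offered load on a shared link (arbitrary units)


def energy_domain(capacity: float) -> float:
    """Local rule A: shrink capacity while utilization is below 80% (saves energy)."""
    return capacity - 20.0 if LOAD / capacity < 0.8 else capacity


def assurance_domain(capacity: float) -> float:
    """Local rule B: grow capacity while utilization is above 60% (keeps headroom)."""
    return capacity + 20.0 if LOAD / capacity > 0.6 else capacity


def simulate(capacity: float, rounds: int) -> list[float]:
    """Let both domains act in turn on the same, fully visible state."""
    history = [capacity]
    for _ in range(rounds):
        capacity = energy_domain(capacity)
        history.append(capacity)
        capacity = assurance_domain(capacity)
        history.append(capacity)
    return history


history = simulate(capacity=100.0, rounds=3)
print(history)  # [100.0, 80.0, 100.0, 80.0, 100.0, 80.0, 100.0]
```

Neither rule is wrong on its own, and both domains have perfect information. The capacity still never settles, because no value satisfies both local targets at once. That gap is a reasoning problem, not a data-exchange problem.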

This is why I increasingly think the real challenge is not just automation, but coherence under distributed reasoning. And that is exactly where I see the need for a more explicit Federated Operational Cognition Layer (F-OCL).

To me, F-OCL is not a super-controller, a central intelligence engine or a replacement for distributed autonomy. It is better understood as a bounded reasoning layer for federated coherence. Its role would not be to command every domain. Its role would be to help preserve cross-boundary operational alignment when autonomous systems need to interact, negotiate, or adapt without losing trust, stability, or service intent.

In that sense, F-OCL is not about removing autonomy. It is about making autonomy safe to interconnect.

One thing I find increasingly important is this: intent exchange is necessary - but not sufficient. Once multiple autonomous domains are operating simultaneously, the challenge is no longer just: "Can they express what they want?" It becomes: "Can they reason through what happens when their intentions collide?"

That requires more than syntax. It requires some shared ability to evaluate: cross-domain side effects, trade-off implications, trust boundaries, policy constraints and service impact across operational scopes.

This is why I do not see F-OCL as "more orchestration." I see it as an architectural response to a harder problem: How do we preserve coherence when autonomy is distributed, dynamic, and only partially visible across boundaries?

If something like F-OCL is to be useful in practice, I think it would need at least five capabilities:

1) Context that is reason-able, not just observable:

Not just telemetry, but a structured operational context that can represent dependencies, service state, policy state, cross-domain relationships and potentially even temporal or causal impact. Raw data alone does not support system-level reasoning.
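As a minimal sketch of what "reason-able" could mean in practice, the hypothetical structure below holds service state, policy state and a dependency map, and can answer a question that raw telemetry cannot: what is affected if a given resource degrades? The class, field names and example resources are my own assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class OperationalContext:
    service_state: dict[str, str] = field(default_factory=dict)
    policy_state: dict[str, str] = field(default_factory=dict)
    # depends_on[x] = the set of things x depends on (may cross domain boundaries)
    depends_on: dict[str, set[str]] = field(default_factory=dict)

    def impacted_by(self, resource: str) -> set[str]:
        """Return everything that directly or transitively depends on `resource`."""
        impacted: set[str] = set()
        frontier = [resource]
        while frontier:
            current = frontier.pop()
            for node, deps in self.depends_on.items():
                if current in deps and node not in impacted:
                    impacted.add(node)
                    frontier.append(node)
        return impacted


ctx = OperationalContext(
    service_state={"video-slice": "healthy", "iot-slice": "healthy"},
    policy_state={"video-slice": "gold", "iot-slice": "bronze"},
    depends_on={
        "video-slice": {"ran:cell-12", "transport:link-7"},
        "iot-slice": {"ran:cell-12"},
        "transport:link-7": {"partner:backhaul-A"},
    },
)
affected = ctx.impacted_by("partner:backhaul-A")
print(sorted(affected))  # ['transport:link-7', 'video-slice']
```

The dependency walk here is deliberately simple. Temporal or causal impact would need a richer model than a static map.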

2) Trade-off reasoning with an explicit decision basis:

This is critical. F-OCL should not "invent" priorities on its own.

It should reason under explicit governance constraints such as: business intent, policy hierarchy, SLA criticality, service class, trust boundaries, operational risk tolerance and regulatory conditions. In other words, F-OCL should reason under policy, not outside policy. That is what makes it governable.
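One way to picture "reasoning under policy" is a decision that always carries its basis with it. In the sketch below, the criteria order, the `Intent` structure and the scores are hypothetical inputs that governance would supply, not something the reasoning layer derives by itself. When the policy gives no basis for a choice, the function declines to choose.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical governance input: criteria in priority order, higher rank wins.
POLICY_HIERARCHY = ["regulatory", "sla_criticality", "service_class"]


@dataclass
class Intent:
    name: str
    ranks: dict  # criterion name -> integer rank assigned by governance


def decide(a: Intent, b: Intent) -> tuple[Optional[Intent], str]:
    """Pick between two colliding intents and report which criterion decided."""
    for criterion in POLICY_HIERARCHY:
        rank_a = a.ranks.get(criterion, 0)
        rank_b = b.ranks.get(criterion, 0)
        if rank_a != rank_b:
            return (a if rank_a > rank_b else b), criterion
    # No criterion separates the intents: do not invent a priority.
    return None, "no policy basis"


energy = Intent("energy-saving", {"sla_criticality": 1, "service_class": 2})
voice = Intent("emergency-voice", {"regulatory": 1, "sla_criticality": 3})

winner, basis = decide(energy, voice)
print(winner.name, "decided by", basis)  # emergency-voice decided by regulatory
```

Returning the deciding criterion alongside the winner is the design point: every outcome can be traced back to a rule someone is accountable for.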

3) Cross-boundary trust and bounded autonomy:

Not every domain can expose everything. And not every autonomous decision should be freely delegated across organizational or vendor boundaries. So federated autonomy needs clear limits around what can be shared, what can be negotiated, what can be inferred and what must remain local. That makes trust not just a security issue - but an architectural one.
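A small sketch of how such limits might be expressed: each attribute of a domain's state is classified as shared, negotiable or local, and anything unclassified is treated as local by default. The attribute names and the three-level classification are my own illustrative assumptions, and the "what can be inferred" question is not modelled here.

```python
from enum import Enum


class Exposure(Enum):
    SHARED = "shared"          # always visible to federation partners
    NEGOTIABLE = "negotiable"  # visible only once explicitly agreed
    LOCAL = "local"            # never leaves the domain


# Hypothetical per-attribute exposure policy for one domain.
EXPOSURE_POLICY = {
    "slice_latency_ms": Exposure.SHARED,
    "spare_capacity_pct": Exposure.NEGOTIABLE,
    "topology": Exposure.LOCAL,
}


def export_view(state: dict, agreed: frozenset = frozenset()) -> dict:
    """Build the view of local state that a partner domain is allowed to see."""
    view = {}
    for key, value in state.items():
        exposure = EXPOSURE_POLICY.get(key, Exposure.LOCAL)  # default deny
        if exposure is Exposure.SHARED:
            view[key] = value
        elif exposure is Exposure.NEGOTIABLE and key in agreed:
            view[key] = value
    return view


state = {
    "slice_latency_ms": 12,
    "spare_capacity_pct": 35,
    "topology": "ring",
    "customer_ids": [1, 2],  # unclassified, so it stays local
}
print(export_view(state))  # {'slice_latency_ms': 12}
print(export_view(state, agreed=frozenset({"spare_capacity_pct"})))
```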

4) Reconciliation, not central override:

If two autonomous domains reach incompatible conclusions, the answer should not always be: "send everything to a central brain." Instead, F-OCL should support bounded reconciliation, selective escalation, policy-aware negotiation and system-level alignment without destroying local autonomy. That, to me, is the real architectural balance.
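To illustrate bounded reconciliation with selective escalation, the toy sketch below assumes each domain states the range it can accept for a shared parameter. If the ranges overlap, the domains settle within the overlap themselves. Only when there is no overlap is the conflict escalated. The `Proposal` type, the parameter and the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    domain: str
    low: float   # lowest acceptable value for the shared parameter
    high: float  # highest acceptable value


def reconcile(a: Proposal, b: Proposal) -> dict:
    """Settle locally when acceptable ranges overlap, escalate only when they do not."""
    low, high = max(a.low, b.low), min(a.high, b.high)
    if low <= high:
        return {"outcome": "reconciled", "range": (low, high)}
    return {"outcome": "escalate", "parties": (a.domain, b.domain)}


ran = Proposal("ran", low=40.0, high=70.0)
transport = Proposal("transport", low=60.0, high=90.0)
partner = Proposal("partner-core", low=80.0, high=95.0)

print(reconcile(ran, transport))  # {'outcome': 'reconciled', 'range': (60.0, 70.0)}
print(reconcile(ran, partner))    # {'outcome': 'escalate', 'parties': ('ran', 'partner-core')}
```

Real negotiation would involve far more than interval overlap, but the shape is the same: most conflicts are resolved between peers, and escalation is the exception rather than the default path.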

5) Post-execution validation:

One thing I think is especially important for trustworthy autonomy: The system should not only decide. It should also learn whether its reconciliation was actually correct. That means comparing: predicted impact vs observed outcome over time.
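A minimal sketch of that comparison, assuming the predicted and observed values are sampled at the same points in time. The metric (mean absolute error), the tolerance and the latency figures are illustrative assumptions, not a proposed standard.

```python
from statistics import fmean


def validate(predicted: list[float], observed: list[float], tolerance: float) -> dict:
    """Compare predicted impact with observed outcome over the same time window."""
    if not predicted or len(predicted) != len(observed):
        raise ValueError("predicted and observed must be non-empty and equally long")
    errors = [abs(p - o) for p, o in zip(predicted, observed)]
    mean_abs_error = fmean(errors)
    return {"mean_abs_error": mean_abs_error, "trusted": mean_abs_error <= tolerance}


# Hypothetical latency (ms) predicted at reconciliation time vs measured afterwards.
result = validate(
    predicted=[10.0, 12.0, 11.0],
    observed=[11.0, 15.0, 13.0],
    tolerance=1.5,
)
print(result)  # {'mean_abs_error': 2.0, 'trusted': False}
```

The metric itself matters less than the feedback loop: each reconciliation leaves behind a prediction that can later be checked against what was actually observed.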

Without that, "reasoning" risks becoming guesswork. With it, federated cognition can become progressively more reliable.

Where I think this connects with current TM Forum work

What makes this especially interesting to me is that many of the building blocks for something like F-OCL may already be emerging - even if under different names.

Recent TM Forum work around intent-driven operations, intent ontology, closed-loop coordination, agent interaction patterns, ODA Canvas and conflict management seems to point in a very similar direction: not toward a centralized autonomous brain, but toward a more structured way of making distributed autonomy interoperable and governable.

That is why I increasingly do not see OCL / F-OCL as "something outside ODA." I see it more as an attempt to make an already emerging coherence function more explicit.

So perhaps the real architectural challenge is not: How do we make each domain more autonomous? but rather: How do we make distributed autonomy coherent enough to be trusted at scale - especially across boundaries? If local autonomy is the engine of Autonomous Networks, then perhaps federated operational cognition is what prevents that engine from pulling the system apart.

So I would be very interested in the community's view: Are intent exchange and policy alignment enough to make federated autonomy trustworthy? Or do we eventually need a more explicit cross-boundary reasoning layer to preserve coherence across autonomous systems?

And if that function is already emerging inside ODA / AN architecture - should we begin naming it more explicitly?

Figure 3. Federated OCL (F-OCL) as a bounded reasoning layer for trustworthy federated autonomy

#DigitalTransformationMaturity

------------------------------

Ngoc Linh Nguyen

Vietnam Posts and Telecommunications Group (VNPT)

------------------------------