As Autonomous Networks move toward higher levels of autonomy, a practical paradox becomes increasingly visible:

The more autonomy we distribute, the harder it becomes to maintain system coherence.

Much of the current discussion rightly focuses on closed loops, domain autonomy, AI agents, and intent-driven automation.

But in real operations, autonomy does not happen in isolation.

Different domains may optimize for different things:

- network performance

- service assurance

- customer experience

- cost efficiency

- energy saving

- regulatory compliance

Each of these may be valid locally.

But at system level, they do not automatically align.

That creates what I see as one of the most important architecture questions for Autonomous Networks:

When multiple autonomous domains are acting at the same time, what ensures that the system still behaves as one operational whole?

By "system coherence", I do not mean rigid central control.

I mean the ability of multiple autonomous domains, loops, and decision functions to operate as part of a consistent whole - where local optimization does not undermine service intent, governance, or end-to-end operational behavior.

In other words, different autonomous functions may optimize locally, but the network should still behave coherently at service and operational level.

The practical risk: autonomy silos

As we move toward L4/L5 autonomy, I think there is a real risk of what might be called autonomy silos.

A domain may become highly autonomous within its own boundary, yet still contribute to fragmentation at system level if there is no shared mechanism for reasoning across operational objectives.

For example, a RAN domain may optimize for energy saving by powering down resources, while another domain is preparing for a burst of latency-sensitive traffic.

Both actions may be locally valid - but without a shared operational reasoning layer, they can easily become systemically misaligned.
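To make the RAN example concrete, here is a minimal sketch of how a shared layer might flag such a misalignment before either action is executed. Everything in it is an assumption made for illustration: the `ProposedAction` structure, the `capacity_delta` field, and the domain and resource names are hypothetical and do not come from any AN, OSS, or ODA specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """Hypothetical record of what one autonomous domain intends to do."""
    domain: str          # domain proposing the action
    resource: str        # shared resource the action touches
    capacity_delta: int  # negative = releases capacity, positive = needs capacity


def find_conflicts(actions):
    """Return pairs of actions from different domains that pull the
    same resource in opposite directions."""
    conflicts = []
    for i, a in enumerate(actions):
        for b in actions[i + 1:]:
            same_resource = a.resource == b.resource
            opposite_direction = a.capacity_delta * b.capacity_delta < 0
            if same_resource and opposite_direction and a.domain != b.domain:
                conflicts.append((a, b))
    return conflicts


# Illustrative values only: one domain powers down, another expects a burst.
actions = [
    ProposedAction("ran-energy", "cell-site-17", -40),
    ProposedAction("service-assurance", "cell-site-17", 25),
    ProposedAction("transport", "link-3", 10),
]
conflicts = find_conflicts(actions)
for a, b in conflicts:
    print(f"{a.domain} and {b.domain} disagree on {a.resource}")
```

The point of the sketch is only that neither domain can see the conflict on its own. It becomes visible once both proposals are expressed against a shared view of the resource.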

This is where I think the industry may eventually need to look beyond automation itself, and ask a harder question:

How is coherence maintained when autonomy is distributed?

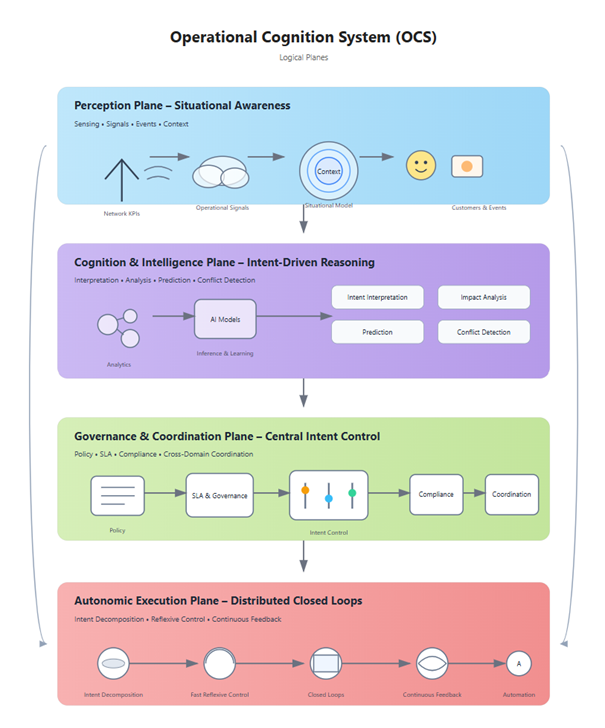

A possible way to think about this: Operational Cognition System (OCS)

One way I have been thinking about this problem is through the idea of an Operational Cognition System (OCS).

Not as a product.

Not as a single centralized "brain".

And not as a replacement for distributed autonomy.

But rather as a logical operational layer that helps connect:

- Contextual Awareness → what the system is sensing and understanding

- Cognition → how signals, intent, and trade-offs are interpreted

- Governance → how policy, constraints, and priorities are applied

- Execution → how autonomous actions are carried out across domains
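As a rough illustration of how these four planes might connect, the sketch below chains them as plain functions. It is a toy model under stated assumptions, not a proposed implementation: the function names, the telemetry fields, the 0.3 load threshold, and the policy format are all hypothetical.

```python
def sense(telemetry):
    """Contextual Awareness: reduce raw signals to the context that matters."""
    return {"load": telemetry["load"], "forecast_burst": telemetry["forecast_burst"]}


def interpret(context):
    """Cognition: weigh signals and propose an action with a reason."""
    if context["forecast_burst"]:
        return {"action": "keep_capacity", "reason": "burst expected"}
    if context["load"] < 0.3:  # assumed threshold, for illustration only
        return {"action": "power_down", "reason": "low load"}
    return {"action": "no_change", "reason": "steady state"}


def govern(proposal, policy):
    """Governance: block any proposal that the policy forbids."""
    if proposal["action"] in policy["forbidden_actions"]:
        return {"action": "no_change", "reason": "blocked by policy"}
    return proposal


def execute(decision, domains):
    """Execution: hand the governed decision to each autonomous domain."""
    return {domain: decision["action"] for domain in domains}


# Hypothetical inputs: low load, no burst forecast, power-down forbidden.
policy = {"forbidden_actions": {"power_down"}}
telemetry = {"load": 0.2, "forecast_burst": False}

decision = govern(interpret(sense(telemetry)), policy)
dispatched = execute(decision, ["ran", "transport"])
print(decision["reason"], dispatched)
```

In this toy run, cognition proposes powering down, governance blocks it, and every domain receives the same governed decision. The planes stay separate, but the outcome is consistent across domains.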

The core idea is simple:

Autonomy may be distributed, but coherence may still need to be architected.

OCS can be viewed not as a replacement for intent management or closed-loop automation, but as the reasoning and coherence layer between them, especially when multiple autonomous domains interpret or optimize against competing intents.

For example:

- Energy Saving

- Low Latency

- SLA Assurance

- Operational Risk

- Cost Efficiency

may all be valid intents, but they cannot always be satisfied at the same time or to the same degree.

That suggests a need not only for automation, but for cognitive reconciliation at system level.
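One simple way to picture such reconciliation is to treat some intents as hard constraints and the rest as weighted preferences, then choose among candidate plans. The sketch below does that. It is one possible approach, not a description of how OCS must work, and the `Intent` structure, the weights, the thresholds, and the candidate plans are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Intent:
    """Hypothetical intent: a named check over a candidate plan."""
    name: str
    weight: float                         # used only for soft intents
    hard: bool                            # hard intents must always hold
    satisfied_by: Callable[[dict], bool]


def reconcile(intents, candidate_plans):
    """Pick the plan that meets every hard intent and has the highest
    total weight of satisfied soft intents. Returns None if no plan
    meets the hard intents."""
    best_plan, best_score = None, -1.0
    for plan in candidate_plans:
        if not all(i.satisfied_by(plan) for i in intents if i.hard):
            continue
        score = sum(i.weight for i in intents
                    if not i.hard and i.satisfied_by(plan))
        if score > best_score:
            best_plan, best_score = plan, score
    return best_plan


# Illustrative intents and thresholds (assumptions, not real SLA values).
intents = [
    Intent("SLA Assurance", 0.0, True, lambda p: p["latency_ms"] <= 20),
    Intent("Energy Saving", 0.6, False, lambda p: p["active_cells"] <= 6),
    Intent("Cost Efficiency", 0.4, False, lambda p: p["cost_units"] <= 100),
]
plans = [
    {"name": "deep-sleep", "latency_ms": 35, "active_cells": 4, "cost_units": 70},
    {"name": "partial-sleep", "latency_ms": 18, "active_cells": 6, "cost_units": 90},
    {"name": "full-power", "latency_ms": 10, "active_cells": 10, "cost_units": 130},
]
chosen = reconcile(intents, plans)
print(chosen["name"])
```

Here the most energy-efficient plan is rejected because it breaks the latency constraint, and the most conservative plan is passed over because it satisfies none of the soft intents. Which intents count as hard, and how the soft ones are weighted, is exactly the kind of decision that belongs to governance rather than to any single domain.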

Figure 1. Operational Cognition System (OCS) – conceptual logical planes for system-level coherence in Autonomous Networks.

The figure illustrates OCS as a logical operational layer that connects contextual awareness, intent-driven reasoning, governance, and distributed autonomous execution.

Its role is not to centralize all intelligence, but to provide the minimal architectural structure required to maintain coherence across increasingly autonomous operational domains.

Why this may matter in practice

In real operational environments, we increasingly see situations where:

- multiple closed loops exist across domains

- local optimization objectives may conflict

- intent must be interpreted differently at different layers

- governance and policy need to remain consistent across distributed automation

In those situations, the problem is not simply:

"Can we automate more?"

The harder question may be:

"Can increasing autonomy remain operationally coherent at scale?"

That is where I believe an OCS-type architectural construct may be useful, whether it is implemented explicitly or emerges from a combination of intent models, policy layers, shared cognition, and coordination mechanisms.

Open question to the community

I would be very interested in how others see this.

Should coherence in Autonomous Networks emerge primarily from distributed domain interaction under shared constraints?

Or does it require a more explicit architectural layer for system-level reasoning and alignment?

I would especially value perspectives on whether this kind of function already exists - implicitly or explicitly - in current AN / OSS / ODA-aligned architectures, even if under different names.

#DigitalTransformationMaturity

------------------------------

Ngoc Linh Nguyen

Vietnam Posts and Telecommunications Group (VNPT)

------------------------------