Original Message:

Sent: Apr 10, 2026 01:50

From: Alexey Rusakov

Subject: When Closed Loops Collide: Is Intent Exchange Enough to Keep Autonomous Networks Coherent?

Hi Ngoc. I believe that in the reasonably short term (6G-ish) we're still going to see a mainly hierarchical approach to this: end-to-end orchestration/closed-loop engines (the E2E closed loop is already part of the TM Forum RA) will do the coordination based on the existing policy definitions and aggregate metrics. The trade-offs between these will be reconciled based on higher-level policies and intents, but these will remain largely organised in a top-down structure, however fluid and fuzzy. If you wish to call it "cognition", sure; but I wouldn't expect a lot of actual cognition in that layer.

My reasoning stems from the same Viable System Model that you've already been referred to in another thread (I'm a big fan of VSM); in its terms, this would be a System 4/System 5 kind of entity. It also stems from Conway's law: the way telco organisations operate will continue to shape the way software (and agents in particular) is organised. I haven't seen many successful escapes from Conway's law in telco over the 30 years I've been following the industry, and I'm not expecting autonomous systems to escape it either. As long as someone in the organisation "owns" the network in general (CNO?), they will want/need to see a high-level "picture" and make some "decisions", and will therefore need a supporting software system for that.

------------------------------

Alexey Rusakov

Red Hat, Inc.

Original Message:

Sent: Apr 08, 2026 02:48

From: Ngoc Linh Nguyen

Subject: When Closed Loops Collide: Is Intent Exchange Enough to Keep Autonomous Networks Coherent?

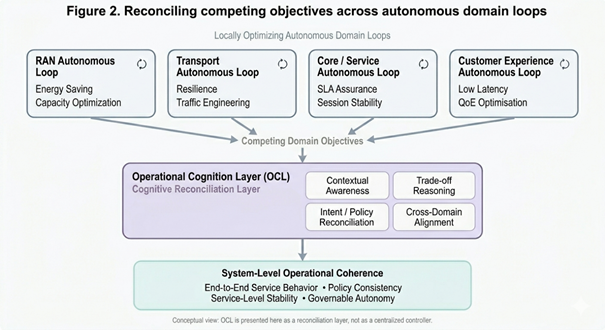

A closed loop can optimize a domain. But who decides what should happen when multiple closed loops are all correct locally - and still incompatible systemically? To me, this is where the Autonomous Networks conversation becomes truly architectural.

Much of today's AN work is rightly focused on making domains more autonomous through intent-driven operations, AI-enabled decisioning, closed-loop automation, and policy-constrained coordination. And as several valuable comments in this discussion have highlighted, many of these capabilities are already being developed within the TM Forum ODA ecosystem.

So the question is no longer: do these functions exist at all? The more difficult question is: Are they explicit enough, at system level, to preserve coherence when many autonomous loops operate simultaneously, dynamically, and at scale? That is the real issue I am trying to explore.

In real operations, different autonomous loops may optimize for very different objectives at the same time:

· a RAN domain may optimize for energy saving

· a transport domain may optimize for resilience

· a core/service assurance loop may optimize for session stability

· a customer experience loop may prioritize low latency or QoE

Each of these can be valid. Each can be intelligent. Each can even be policy-compliant within its own operational scope. And yet, together, they may still create undesirable system behavior. This is where autonomy stops being just a matter of local optimization. It becomes a matter of trade-off reasoning, cross-domain conflict resolution, intent reconciliation, system-level operational judgment, … And that, in my view, is where the architectural gap begins to appear.
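To make the "locally correct, systemically incompatible" case concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Proposal` type, the resource names, and the idea of reducing each loop's action to a signed direction on a shared resource are hypothetical and not taken from ODA or any TM Forum specification.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Proposal:
    loop: str        # hypothetical name of the closed loop issuing the proposal
    resource: str    # hypothetical shared resource the loop wants to act on
    direction: int   # +1 = scale up / activate, -1 = scale down / deactivate


def find_conflicts(proposals):
    """Return pairs of proposals that push the same resource in opposite directions."""
    return [
        (a, b)
        for a, b in combinations(proposals, 2)
        if a.resource == b.resource and a.direction * b.direction < 0
    ]


# Each proposal is valid within its own domain scope.
proposals = [
    Proposal("ran-energy-saving", "cell-42-carriers", -1),
    Proposal("cx-low-latency", "cell-42-carriers", +1),
    Proposal("transport-resilience", "backhaul-link-7", +1),
]

conflicts = find_conflicts(proposals)
for a, b in conflicts:
    print(f"{a.loop} vs {b.loop} on {a.resource}")
```

The sketch only detects direct opposition on a single resource. Cascading side effects across domains, which are the harder case, would need a model of how resources depend on each other.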

I fully agree with the view that ODA already contains many of the right ingredients: intents, events, autonomous domains, orchestration patterns, agent interaction mechanisms, governance frameworks, … But from an architectural clarity perspective, I think there is still an open question: When competing autonomous decisions arise across domains, where exactly does the reconciliation logic live?

Not just in theory, not just "somewhere in the architecture", but as a recognizable system-level function. Because if that function remains too distributed, too implicit, or too fragmented, then we may end up with strong local autonomy, rich AI-enabled loops, good intent exchange, …but still weak operational coherence at scale. And in practice, that is often where systems become fragile.

This is one reason I have been thinking about what I previously called OCS, and now describe more precisely as an Operational Cognition Layer (OCL). I do not see it as a central controller, a super-orchestrator or a "manager of managers", but rather as an explicit architectural function responsible for cognitive reconciliation across autonomous domains.

In that role, OCL would not "run" every loop. Instead, it would help the system reason across loops when local autonomy creates system-level tension. Its role would be to make visible and governable things like competing intents, policy boundaries, operational priorities, trade-offs between domain objectives, cascading side effects across domains. So the point is not to reduce autonomy. The point is to make autonomy coherent, governable, and trustworthy when distributed intelligence begins to interact at scale.
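One very reduced way to picture "visible and governable" is a reconciliation step that returns not just a choice but a record of what was traded off. The Python sketch below assumes a static, externally supplied priority per intent, which is a deliberate simplification; the `Intent`, `Decision` and `reconcile` names are hypothetical and do not describe how an OCL would actually reason.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    domain: str
    objective: str
    priority: int  # assumption: lower number = higher operational priority


@dataclass(frozen=True)
class Decision:
    winner: Intent
    deferred: tuple   # intents that lost the trade-off, kept for audit
    rationale: str    # human-readable explanation of the trade-off


def reconcile(intents):
    """Pick the highest-priority intent and record what was deferred and why."""
    if not intents:
        raise ValueError("no intents to reconcile")
    # sorted() is stable, so intents with equal priority keep their input order.
    ranked = sorted(intents, key=lambda i: i.priority)
    winner, deferred = ranked[0], tuple(ranked[1:])
    losers = ", ".join(f"{i.domain}:{i.objective}" for i in deferred) or "nothing"
    rationale = (
        f"{winner.domain}:{winner.objective} (priority {winner.priority}) "
        f"preferred over {losers}"
    )
    return Decision(winner, deferred, rationale)


decision = reconcile([
    Intent("ran", "energy-saving", 3),
    Intent("core", "session-stability", 1),
    Intent("cx", "low-latency", 2),
])
print(decision.rationale)
```

The point of the sketch is the `Decision` record rather than the ranking rule: the local loops keep running on their own, and the layer's output is an explicit, auditable statement of which objective gave way.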

Without this kind of explicit system-level cognition, Autonomous Networks may become very good at optimizing locally, but increasingly weak at behaving coherently end-to-end. That does not always show up as a dramatic failure. More often, it shows up as unstable optimization cycles, policy inconsistency, intent drift, cross-domain behavioral surprises, or an architecture that is "autonomous" but still difficult to trust operationally. And to me, that is one of the most important AN questions ahead: Not whether domains can become autonomous - but whether autonomy can remain coherent once it becomes distributed.

So perhaps the sharper architectural question is this: In Autonomous Networks, are intent exchange and policy coordination alone enough to preserve system coherence? Or do we need a more explicit Operational Cognition Layer to reconcile trade-offs across autonomous domains?

My current view is that many of the ingredients already exist - but what may still be missing is their explicit expression as a coherent architectural function. And that may matter more and more as we move toward higher-scale, higher-speed, cross-domain autonomy.

I would be very interested in how others see this.

Figure 2. Conceptual view of OCL as a cognitive reconciliation layer across competing autonomous loops.


#DigitalTransformationMaturity

------------------------------

Ngoc Linh Nguyen

Vietnam Posts and Telecommunications Group (VNPT)

------------------------------